When it comes to AI and process control, the quality of data is key

Heikki Laurila, Product Marketing Manager, Beamex

Process control is an essential part of manufacturing and industrial operations, ensuring that processes are consistent, efficient, and effective. Process control is based on measurements in the process, so accurate measurements are the basis for good process control. Even more so when artificial intelligence (AI) steps in and there are fewer human interactions.

Traditionally, process control has relied on human operators to monitor and adjust processes, but with the increasing use of automation and digital technologies, AI is becoming an increasingly important tool. It can be used for everything from analysing data from process measurements and other sources to identifying patterns in the data and using them to make decisions about optimising performance.

But all this analysis is moot if the measurement data is not accurate to start with. I’ll explain why in just a bit.

The benefits of using AI in process control

First, let’s look at how AI can manage to pull off all these wonders I mentioned earlier.

One of the key benefits of using AI in process control is the ability to analyse large volumes of data in real time. In manufacturing and industrial settings, there are often thousands of sensors and other data sources continuously generating data. With traditional process control methods, it can be difficult for human operators to monitor all this data and make decisions quickly enough to optimise performance. AI can process this data more quickly and accurately, supporting decision-making based on real-time data rather than historical trends or intuition.

Another benefit of using AI in process control is the ability to identify patterns and anomalies that operators might miss. For example, AI algorithms can analyse data from multiple sensors to detect correlations and patterns that might not be immediately obvious to the human eye. This can help identify potential issues before they become critical, allowing operators to take corrective action before production is impacted.
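To make the idea of spotting anomalies concrete, here is a minimal sketch. It uses a simple rolling z-score on a single sensor, which is far simpler than the multi-sensor analysis an AI system would run, and the function name, window size, threshold and temperature values are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_anomalies(readings, window=5, threshold=3.0):
    """Return the indices of readings that deviate strongly from the
    readings in the window immediately before them (rolling z-score)."""
    anomalies = []
    for i in range(window, len(readings)):
        recent = readings[i - window:i]
        mu = mean(recent)
        sigma = stdev(recent)
        if sigma == 0:
            # A perfectly flat window gives no scale to compare against.
            continue
        if abs(readings[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical temperature readings in degrees Celsius, with one spike.
temperatures = [80.1, 80.0, 80.2, 79.9, 80.1, 80.0, 85.5, 80.1, 80.2]
anomaly_indices = find_anomalies(temperatures)
print(anomaly_indices)  # → [6]
```

Note that the check only works if the readings themselves can be trusted: a drifting sensor shifts the baseline, so the spike it should catch may no longer stand out.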

Predictive maintenance and real-time optimisation

In process control, this ability to spot patterns early can be leveraged to enable predictive maintenance. Predictive maintenance uses data from sensors and other sources to predict when equipment is likely to fail, enabling operators to schedule maintenance proactively rather than waiting until equipment breaks down. This helps avoid unplanned downtime, reduce maintenance costs, and extend the lifespan of the equipment.
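As a rough illustration of the principle, the sketch below fits a straight line to a rising vibration trend and estimates how long it will take to reach an alarm limit. Real predictive maintenance models are considerably more sophisticated; the linear trend, the function name, the vibration values and the alarm limit are all assumptions made for the example.

```python
def hours_until_threshold(hours, values, threshold):
    """Fit a least-squares line to (hours, values) and estimate how many
    hours after the last measurement the trend reaches the threshold.
    Returns None if the trend is flat or falling."""
    n = len(hours)
    mean_x = sum(hours) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    # Never report a negative time if the limit has already been passed.
    return max(0.0, crossing - hours[-1])


# Hypothetical pump vibration (mm/s) logged every 100 operating hours.
operating_hours = [0, 100, 200, 300, 400]
vibration = [2.0, 2.5, 3.0, 3.5, 4.0]
remaining = hours_until_threshold(operating_hours, vibration, threshold=7.0)
print(f"Estimated hours until alarm limit: {remaining:.0f}")
```

With these made-up numbers the estimate comes out at roughly 600 hours. An uncorrected measurement error in the vibration sensor would move that estimate directly, which is why the prediction is only as good as the data behind it.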

AI can also be used to optimise processes in real time. By analysing data from sensors and other sources, AI algorithms can identify opportunities to improve efficiency and reduce waste. For example, AI can be used to adjust the temperature or pressure of a process to optimise performance or reduce energy consumption. This can lead to significant cost savings and improved performance.

The benefits to the process control sector are clear to see. But to make all this happen, data quality is the deciding factor.

It’s all based on measurement data quality

Despite the many benefits of using AI in process control, there are also some challenges that need to be addressed. One of the biggest is data quality. AI algorithms rely on high-quality data to make accurate predictions and decisions. If the data is inaccurate or inconsistent, the predictions and decisions built on it will be wrong too. Ensuring data quality is therefore essential when using AI in process control.

We saw this at the Almaraz Nuclear Power Plant (CNA) in Spain, where enhanced equipment performance allowed calibration operations with greatly improved uncertainty levels. As a result, the uncertainty in measuring the parameters used to determine reactor power improved from 2% to a mere 0.4%. This increased accuracy allowed them to run the power plant at a higher output, resulting in a large revenue increase. These precise measurements will be even more critical in the future as the energy sector continues to adopt AI for power generation optimisation.

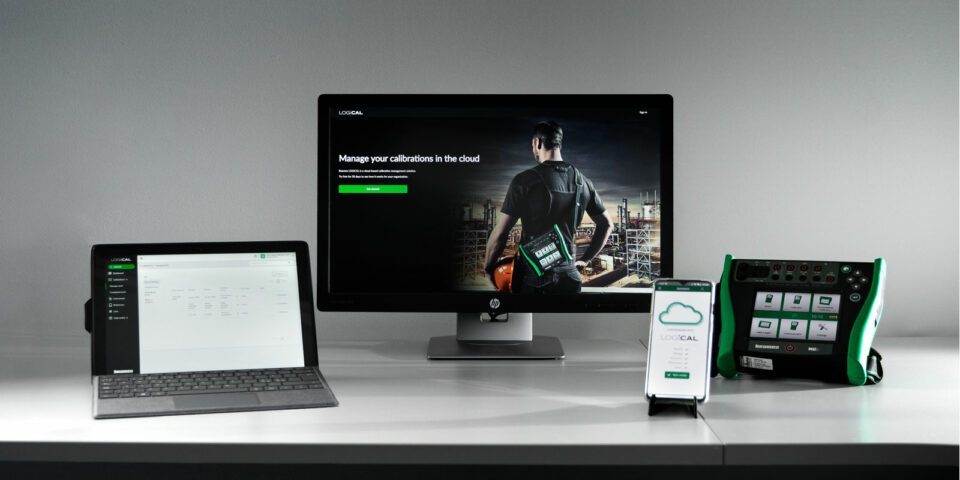
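To see why a smaller uncertainty can translate into more output, here is a deliberately simplified sketch using the 2% and 0.4% figures from the Almaraz example. It assumes, purely for illustration, that a plant has to keep its operating point below its permitted limit by a margin equal to the measurement uncertainty. That rule and the function name are my assumptions, not a description of how Almaraz or any regulator actually calculates it.

```python
def max_operating_fraction(uncertainty_pct):
    """Fraction of the permitted limit that can be used if the operating
    point must stay below the limit by the measurement uncertainty.
    (Simplified illustrative rule, not an actual licensing calculation.)"""
    return 1.0 - uncertainty_pct / 100.0


before = max_operating_fraction(2.0)  # 2% uncertainty
after = max_operating_fraction(0.4)   # 0.4% uncertainty
gain_points = (after - before) * 100

print(f"Usable fraction before: {before:.3f}")
print(f"Usable fraction after:  {after:.3f}")
print(f"Gain: {gain_points:.1f} percentage points")
```

Under this assumption, tightening the uncertainty from 2% to 0.4% frees up 1.6 percentage points of the permitted limit that were previously held back as margin.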

To ensure the high quality of measurement data, proper calibration of process measurements is critical in AI-based process control. It is essential to calibrate all measurements regularly to maintain the accuracy of the data they generate. This highlights the need for the process industry to use more effective calibration processes. The most effective calibration processes are fully paperless, and the calibration data moves digitally throughout the process, ensuring high-quality data and data integrity.
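At its core, a calibration compares what an instrument shows against a more accurate, traceable reference and checks whether the difference is within the allowed tolerance. The sketch below shows that comparison for a handful of test points. The class and function names, the 0 to 100 °C range, the tolerance and the readings are all hypothetical, and a real calibration would also document the uncertainty of the reference and the full traceability chain.

```python
from dataclasses import dataclass


@dataclass
class CalibrationPoint:
    reference: float  # value from the traceable reference standard
    reading: float    # value shown by the instrument being calibrated


def evaluate_calibration(points, span, tolerance_pct_of_span):
    """Compare each reading with its reference value and check the error
    against a tolerance expressed as a percentage of the instrument span.
    Returns (overall_pass, [(reference, error, point_pass), ...])."""
    limit = span * tolerance_pct_of_span / 100.0
    results = []
    for p in points:
        error = p.reading - p.reference
        results.append((p.reference, error, abs(error) <= limit))
    overall_pass = all(ok for _, _, ok in results)
    return overall_pass, results


# Hypothetical 0 to 100 °C temperature transmitter, tolerance 0.5% of span.
points = [
    CalibrationPoint(reference=0.0, reading=0.1),
    CalibrationPoint(reference=50.0, reading=50.3),
    CalibrationPoint(reference=100.0, reading=100.7),
]
passed, results = evaluate_calibration(points, span=100.0, tolerance_pct_of_span=0.5)

for reference, error, ok in results:
    print(f"{reference:6.1f} °C  error {error:+.2f} °C  {'pass' if ok else 'FAIL'}")
print("Calibration passed" if passed else "Calibration failed: adjustment needed")
```

In this made-up case the top test point is outside tolerance, so the instrument would need adjusting before its data is fed to any AI-based control system. Keeping results like these in digital form, rather than on paper, is what lets the calibration data move through the process without transcription errors.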

Keep calm and calibrate

There are concerns about the potential impact of AI on jobs. As AI becomes more prevalent in manufacturing and industrial settings, there is a risk that it could replace human workers in some roles. This could lead to job losses and social disruption. However, new jobs and industries will emerge as AI creates new opportunities for innovation and growth. This includes jobs in the field of measurement and calibration, with the need to ensure data accuracy becoming even more critical.

In conclusion, AI-based process control is transforming the way we approach manufacturing and industrial operations. However, to fully realise the benefits of AI-based process control, it is essential to address challenges such as data quality, proper calibration and the need for skilled personnel. By doing so, we can unlock new opportunities for innovation and growth in manufacturing and industry.

The saying “Everything is based on measurements” is even more valid with AI-based process control. The only way to ensure high-quality measurement data in a process plant is by running a high-quality calibration program that ensures all measurement instruments are calibrated regularly, traceably, and with sufficiently low uncertainty.
