ChatGPT: A dumb, energy-guzzling AI revelation?

Antonio Matamala, Country Manager, Beamex Germany

ChatGPT is one of the biggest technological revelations of recent times. What impresses me is that this large language model (LLM) is easy to use, making it feel like you’re interacting with another person. It was also impressive how quickly marketing companies began capitalising on this generic AI chatbot to sell “optimisation” for specific purposes. Today, I received an email inviting me to check whether ChatGPT understood our company, products and services and whether it could explain our unique offering.

While the sender’s aim was to sell consulting services, this specific use of AI reminded me of a technology company that developed an AI-based application to predict the energy consumption of its customer base, so that it knew how much electricity it would need to supply (and buy). The model could predict the next day’s energy consumption in a given district based on the time of day, the type of day, weather information and past patterns. I then wondered how it would cope with the massive energy consumption of an LLM being trained.

According to Medium, GPT-3, the first model of ChatGPT released for public use, had 175 billion machine learning parameters and was trained on 10,000 NVIDIA V100 GPUs. Its training is estimated to have consumed 936 megawatt-hours, enough energy to power a single electric car to the moon and back more than six times, a journey of more than 5.4 million kilometres. Still, this is overshadowed by the electricity consumed by crypto mining.
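The comparison can be sanity-checked with a few lines of arithmetic. The sketch below takes the 936 MWh and 5.4 million km quoted above and assumes an average Earth-Moon distance of about 384,400 km, a figure that is not given in the article. It works out how many round trips that distance represents and what consumption per 100 km the comparison implies for the car.

```python
# Sanity check of the "electric car to the moon and back" comparison.
# Figures from the article: 936 MWh of training energy, 5.4 million km driven.
# Assumption (not from the article): average Earth-Moon distance of 384,400 km.

TRAINING_ENERGY_MWH = 936
DISTANCE_KM = 5_400_000
EARTH_MOON_KM = 384_400  # assumed average distance


def round_trips(distance_km: float, one_way_km: float = EARTH_MOON_KM) -> float:
    """Number of there-and-back journeys that fit into distance_km."""
    return distance_km / (2 * one_way_km)


def implied_consumption_kwh_per_100km(energy_mwh: float, distance_km: float) -> float:
    """Energy per 100 km the car would need for the comparison to hold."""
    energy_kwh = energy_mwh * 1_000
    return energy_kwh / distance_km * 100


if __name__ == "__main__":
    trips = round_trips(DISTANCE_KM)
    consumption = implied_consumption_kwh_per_100km(TRAINING_ENERGY_MWH, DISTANCE_KM)
    print(f"Round trips: {trips:.1f}")
    print(f"Implied consumption: {consumption:.1f} kWh/100 km")
```

Under these assumptions, the distance works out to roughly seven round trips and an implied consumption of about 17 kWh per 100 km, which is a plausible order of magnitude for an electric car.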

From an environmental point of view, this can’t continue, or the consequences will be catastrophic. RMI, a non-profit working to transform global energy systems, estimates that Bitcoin alone consumes 127 terawatt-hours a year, putting it on a par with the energy use of a small country. It also estimates that cryptocurrency activities in the US emit 25-50 million tonnes of CO2 a year.

Ethereum, one of the most popular cryptocurrencies, successfully upgraded its software architecture in September 2022 and reduced its electricity usage by over 99.9 percent. This positive move puts the onus on Bitcoin to change its energy-thirsty approach, since it is now responsible for most cryptocurrency emissions.

Nvidia, the largest provider of GPUs and machine learning accelerators, is also under pressure to address sustainability and energy efficiency. This is good because engineers are excellent problem solvers, and AI’s story is just beginning. Ultimately, we can expect ever more hardware to be deployed to feed the insatiable demand for computing power, not least because the use of AI and data (think autonomous driving) will continue to increase.

Opening the door to AI predictive maintenance

Essentially, AI is dumb, even though it can give intelligent-looking answers. It recognises patterns and makes predictions using models trained on massive amounts of data, which makes reliable data fundamental. The common denominator between an AI chatbot like ChatGPT and the calibration mindset is being data conscious. Anybody in calibration will tell you it’s not about knowing the absolute truth, because data has an error margin by default. Accuracy depends on the instrumentation and on the data being fed in to produce the output. This is no different when using ChatGPT.

In this context, I asked ChatGPT if it makes mistakes. This is what it answered:

“As an AI language model, I can make mistakes. However, I have been trained on a vast amount of data and have undergone rigorous testing to minimize errors. Both humans and AI can make mistakes. However, it is worth noting that […] AI can be more accurate than humans in certain tasks, especially those requiring high precision and consistency. For example, AI can perform complex calculations, analyse large amounts of data, and detect patterns or anomalies that might be difficult for humans to identify.”

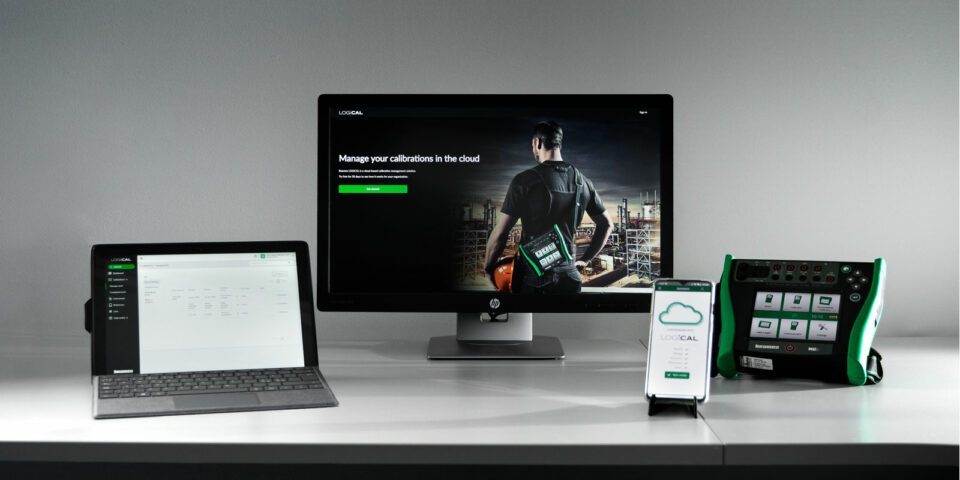

At this point, I was reminded of the Beamex value proposition. Beamex solutions ensure our customers can rely on the sensor data they use in their production facilities. Calibration is the only way to ensure that plant managers can trust 100% of the data used to control their production processes. Ideally, a calibration process should occur without human interaction or media breaks, such as copying readings from paper into a digital system.

A streamlined calibration process, in which data is captured, processed and exchanged from machine to machine, should be completely digital. ChatGPT is sometimes wrong, and so are people: manual input, especially when working with data, can be a source of error in the calibration process, with unpredictable consequences. Add fatigue after a long day’s work, and mistakes are inevitable when calibration data is recorded manually on paper.

Traditionally, the main objective of a plant manager was to keep everything running. The current mindset around AI and data analytics has triggered a search for value in the data. For example, consider all the assets in a production facility equipped with sensors continually producing data in an Industrial Internet of Things (IIoT) system. By tapping into those data sources, you can feed AI models and search for anomalies, such as detecting whether a pump or motor vibrates strangely. By training a model, you can predict whether it may break within the next 48 hours, allowing the maintenance manager to have a replacement part in stock and reducing downtime.
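As a rough illustration of the anomaly search described above, the sketch below flags vibration readings that deviate strongly from the recent past using a rolling z-score. This is a deliberately simple statistical stand-in, not a trained predictive model, and the function name, window size, threshold and sample values are all hypothetical.

```python
from statistics import mean, stdev


def find_vibration_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate strongly from the preceding window.

    readings:  vibration amplitudes (e.g. mm/s) in time order
    window:    number of preceding readings used as the baseline (hypothetical default)
    threshold: how many standard deviations count as "strange" (hypothetical default)
    """
    anomalies = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Flat baseline: any change at all is treated as a deviation.
            if readings[i] != mu:
                anomalies.append(i)
            continue
        if abs(readings[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    # Made-up pump vibration data with one obvious spike at index 7.
    pump_vibration = [2.1, 2.0, 2.2, 2.1, 2.0, 2.1, 2.2, 6.5, 2.1, 2.0]
    print(find_vibration_anomalies(pump_vibration))
```

With the made-up data above, only the spike at index 7 is reported. A check like this is only as trustworthy as the sensor behind it: a sensor that has drifted out of calibration shifts the baseline and can hide or invent anomalies.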

AI has gone mainstream, and now everybody can use it. The handling, quality, management, accessibility and processing of data have moved to the next level. If you have a well-calibrated sensor, you can feed reliable data into an AI model for predictive maintenance, taking you full circle on the value of having accurate data.
